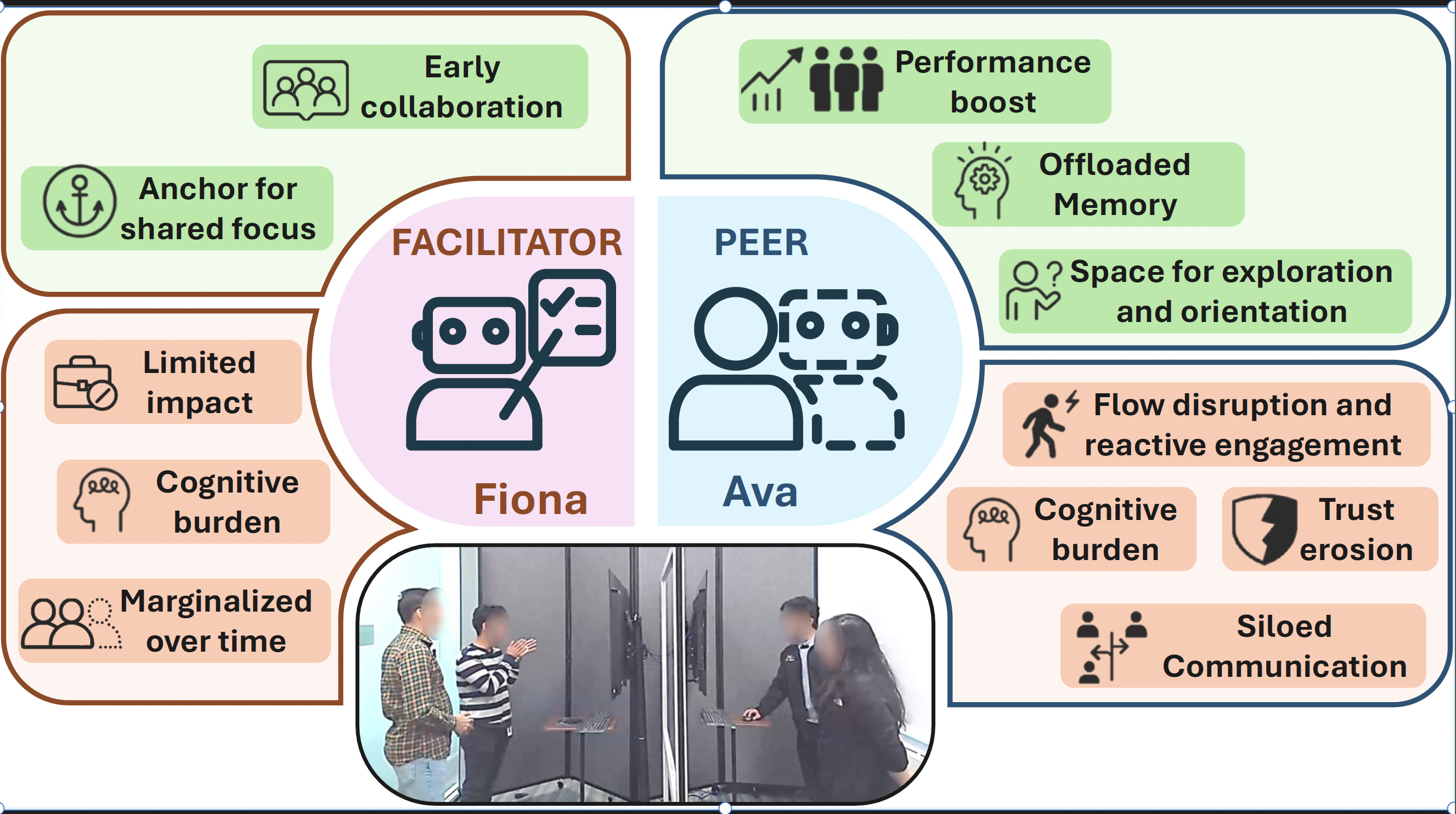

Fiona, the facilitator

Process-focused AI support

- Suggested collaboration strategies

- Gave time reminders

- Generated periodic summaries of team discussion

How should AI participate in human teamwork: as a facilitator that guides the group, or as a peer that contributes ideas?

This project focuses on the interaction cost of AI in teamwork: when support is helpful, when it becomes disruptive, and how role design changes collaboration quality.

Most AI systems still behave like tools. They wait for prompts, answer questions, and stay at the edge of collaboration.

But real teamwork is dynamic. Teams coordinate, split work, get stuck, recover, and rethink. I wanted to understand what happens when AI becomes part of that process.

In this project, I designed and studied two proactive AI roles for co-located collaborative problem-solving: a facilitator that supported coordination and reflection, and a peer that contributed ideas, hints, and follow-up support.

I built the system, designed the study environment, and ran a mixed-methods evaluation with 24 participants. The work was published at ACM CHI 2026.

Two proactive AI teammates were designed to test contrasting roles in the same collaborative task.

Teams solved time-sensitive collaborative problems in a controlled yet socially dynamic environment.

This project was conducted in collaboration with Honda Research Institute US during my internship. The work brought together research questions about human-AI teamwork, product-facing questions about proactive behavior, and practical implementation decisions for the AI probes.

That collaboration made the study both rigorous and future-facing. It asked not just whether proactive AI can work, but how different participation styles might translate into real collaborative systems.

I defined the core UX question around proactive AI roles in teamwork and translated it into two comparable AI probes.

I built the system, designed the collaborative study environment, and created the task flow needed for a within-subjects comparison.

I ran the evaluation, captured behavioral and self-report evidence, and connected qualitative interpretation with team outcomes.

I synthesized the findings into design principles for how collaborative AI should time, frame, and adapt its participation.

The objective was not to build a final product. It was to create two clearly different AI participation styles and evaluate how each changed collaboration.

From a UX research perspective, the project focused on role design, interaction cost, team dynamics, and legibility. The core question was whether proactive AI should guide the team as a facilitator or join the work as a peer.

Most collaborative AI today is reactive. Someone in the group has to pause, prompt, interpret the result, and bring it back into the conversation. That interaction cost matters in fast, shared work.

So I asked a more specific UX question: should AI behave like a facilitator, helping the group coordinate and reflect, or like a peer, offering ideas and problem-solving support as an equal participant?

Fiona, the facilitator: process-focused AI support

Ava, the peer: task-focused AI support

The goal was not to build a final product, but to instantiate two clearly different ways AI could participate in teamwork.

I used digital escape rooms because they naturally create time pressure, distributed information, shared attention demands, and fast coordination cycles. That made them a strong setting for observing how proactive AI affected real team interaction.

The study combined behavioral observation, real-time transcripts, surveys, and focus group interviews. This made it possible to compare not only task outcomes, but also how teams felt, coordinated, and adapted over time.

The study interface and probe behaviors helped surface how teams interpreted, used, ignored, or pushed back on proactive AI participation over time.

Ava, the peer, sometimes gave timely hints, supported memory offloading, and opened space for exploration. But it also increased workload, disrupted flow, and sometimes pulled people into side conversations with the AI.

Fiona often faded into the background, especially when teams felt the summaries were repetitive. Yet teams performed best overall in the facilitator condition. That revealed an important design tension between how useful the AI felt and how much it actually supported the team.

Some teams relied on the AI and later became more reflective. Others became dependent and then frustrated. This suggested that collaborative AI should adapt over time rather than hold a fixed role.

Too much intervention increases cognitive load, even when the AI is technically helpful.

AI can disrupt communication even when its ideas are good, so social fit matters as much as output quality.

People need enough rationale to evaluate confident AI suggestions and stay appropriately critical.

Early-stage structure and later-stage idea support may require different AI behavior within the same workflow.

This work was published at ACM CHI 2026 and conducted in collaboration with Honda Research Institute US.

More importantly, it helped me articulate a product-level insight I now carry into my broader UX research work:

The best collaborative AI is not the AI that speaks the most. It is the AI that knows when, how, and why to participate.
