What investigators looked for

- Exposed employee information

- Leaked credentials

- Network infrastructure vulnerabilities

- Unprotected digital assets

- Public attack surfaces

How can collaborative vulnerability assessments be scaled using open-source intelligence, and where can generative AI meaningfully support novice investigators without undermining trust or judgment?

This project sits at the intersection of collaborative work, novice learning, and sensitive-domain AI use. It asks how human-centered design can make investigative systems more scalable while preserving trust, coordination, and accountability.

Small businesses are frequent targets of cyberattacks but often lack the expertise or resources to conduct thorough security assessments. Traditional vulnerability assessments require specialized technical skills, internal system access, and significant time investment.

In this project, I explored whether open-source intelligence (OSINT), meaning information that is publicly available on the web, could be used to conduct meaningful vulnerability assessments for organizations.

OSINT investigations are complex. Analysts must sift through noisy public data, verify evidence, coordinate findings, and document investigative workflows. To address those challenges, I designed and studied an OSINT Clinic where student investigators collaboratively conducted vulnerability assessments using only publicly available data, then explored how generative AI could augment that workflow.

This work resulted in a longitudinal co-design study and real-world pilot deployments with small businesses, culminating in design insights for AI-augmented investigative systems.

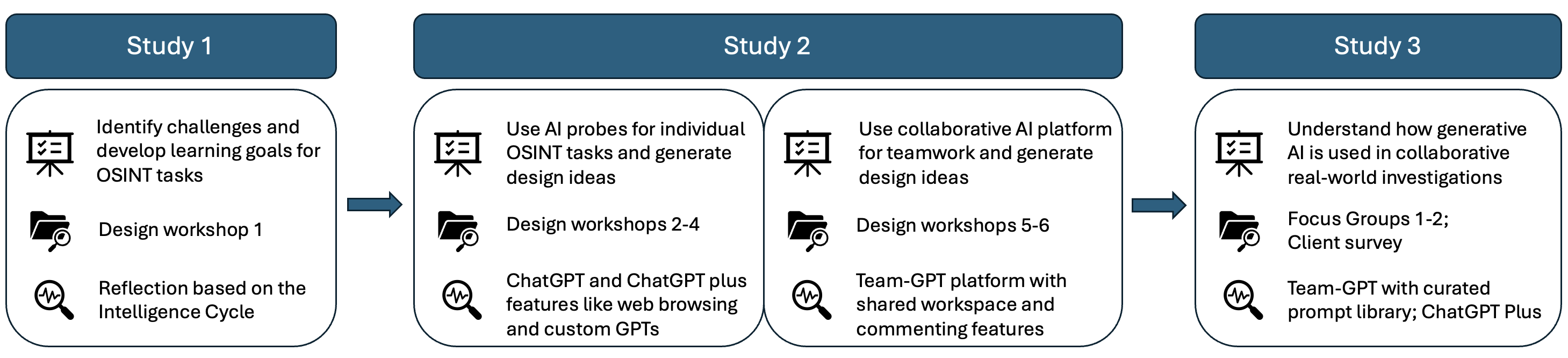

An academic-year study combining participatory workshops, probes, and real-world pilot investigations.

Focused on scalable vulnerability assessment using only publicly available information.

Small businesses face serious cybersecurity risks but often lack the resources to conduct vulnerability assessments. According to industry reports, 43% of cyber breaches target small businesses, yet many organizations do not have the expertise to identify potential attack surfaces.

Cybersecurity clinics have emerged as a promising model, where students perform security assessments while gaining hands-on experience. But traditional clinics depend on internal system access, specialized security tools, and high levels of technical expertise, which limits how scalable the model can be.

I explored a different approach: what if vulnerability assessments could be conducted using only publicly available data? That question led to the OSINT Clinic.

I framed the research questions and defined the clinic as a UX research problem around collaboration, scalability, and AI augmentation.

I designed the OSINT Clinic model, the participatory co-design process, and the AI design probes used to test support concepts.

I conducted the longitudinal study, analyzed collaborative investigation workflows, and traced how novice expertise developed over time.

I translated findings into design implications for AI-augmented investigative systems in sensitive domains.

How can vulnerability assessments be scaled using OSINT?

What challenges arise in collaborative OSINT investigations?

How can generative AI support novice investigators?

How can AI be integrated into real-world investigative workflows?

The OSINT Clinic is a collaborative investigation program where student analysts conduct cybersecurity vulnerability assessments using publicly available information.

The clinic provided a structured environment where students collaborated on real investigations, coordinated evidence, and tested where AI support could be useful.

Because OSINT relies only on public data, the approach avoids risks associated with internal network access while still revealing meaningful security insights.

The study involved six undergraduate student investigators working collaboratively in the clinic. Students met twice weekly for training, practice investigations, and design workshops.

Through participatory workshops, students mapped investigative workflows using a structured design canvas. This surfaced challenges in planning, collecting relevant data, verifying sources, analyzing large volumes of information, documenting evidence, and coordinating among team members with uneven expertise.

I introduced generative AI probes to explore how AI could augment investigation workflows. We experimented with AI-generated investigative prompts, workflow support tools, collaboration platform features, and structured prompt libraries for generating leads, summarizing findings, organizing evidence, and guiding novice analysts.

Students conducted vulnerability assessments for three real small businesses. The AI-augmented workflows were used during these investigations to evaluate their practical value and expose both the benefits and limits of integrating AI into investigative work.

Teams had to coordinate across sources, verify each other’s findings, and synthesize fragmented evidence. Students often struggled to maintain shared awareness of what had been investigated, which leads were promising, and who owned each task.

Students often did not know where to start, how to pivot when a lead failed, or which tools fit a given task. AI prompts were especially helpful for generating investigative directions and reducing the blank-page problem.

Generative AI helped bridge knowledge gaps by suggesting investigative strategies, summarizing large volumes of data, and helping students structure reports. That allowed novice analysts to approach work that normally requires deeper domain expertise.

Students were wary of sharing sensitive investigation details with AI systems and of relying too heavily on AI outputs, and they were concerned about maintaining ethical standards. This exposed a socio-technical gap between AI capability and trust in sensitive domains.

Investigative work requires human judgment. AI should generate leads and structure workflows rather than provide final answers.

Investigative tools should help teams maintain shared awareness of ongoing leads, evidence collected, and investigation progress.

AI can help novice investigators develop expertise through guided workflows, prompts, and structure rather than simple automation.

Investigators need to understand how AI produced its outputs to maintain trust, accountability, and ethical standards.

The OSINT Clinic demonstrates how human-centered design can transform complex investigative workflows.

Key contributions include a scalable model for OSINT-based vulnerability assessments, a co-design methodology for AI-augmented investigations, empirical insights into collaborative OSINT workflows, and design guidelines for AI-supported investigative systems.

Research takeaway

The project showed that AI is most valuable in cybersecurity investigations when it helps novice teams explore, coordinate, and learn, without displacing the human judgment required for sensitive work.